Von Neumann's late work — "Theory of Self-Reproducing Automata" (edited posthumously by Burks, 1966) and "The Computer and the Brain" (1958) — formalized a question that maps directly onto the current AI situation: what is the minimum complexity required for a system to produce things as complex as itself?

The Universal Constructor. Von Neumann proved that self-reproduction requires four components:

• A — A universal constructor: a machine that can build any machine from a description.

• B — A universal copier: copies any instruction tape.

• C — A controller: coordinates A and B.

• Φ(X) — An instruction tape describing automaton X.

Self-reproduction of the system S = (A + B + C + Φ(S)): A reads Φ(S) and constructs a new A+B+C. B copies Φ(S) and attaches it. The result is a new S. Crucially, the description Φ serves a dual role: it is both a program (interpreted by A to build the offspring) and data (copied verbatim by B into the offspring). This is exactly the structure of DNA, discovered independently at nearly the same time — DNA is both transcribed (program → proteins) and replicated (data → copied during cell division).

The complexity threshold. Von Neumann identified a critical complexity level. Below it, each generation of offspring is simpler than its parent — reproduction degrades. Above it, offspring can be equally complex or more complex (through modification of the instruction tape before copying — i.e., mutation). This threshold is what separates systems that decay from systems capable of open-ended evolution. It is the formal boundary between tools and agents.

Connection to Turing. Turing's 1936 result established the universal computer: a machine that can simulate any computation. Von Neumann's universal constructor is the physical analogue: a machine that can build any physical structure. Together they define a duality — logical universality (Turing) and constructive universality (Von Neumann). A system above both thresholds can think anything and build anything, including improved versions of itself.

Where current AI architectures sit. A frontier LLM arguably approaches Turing universality — it can simulate (imperfectly) arbitrary computations through in-context learning and chain-of-thought. But it is nowhere near Von Neumann constructive universality. It cannot design, train, and deploy a successor system from scratch. The training process (SGD, data curation, RLHF, infrastructure) is hand-designed by humans. The system is a product of a constructor, not itself a constructor.

This is changing incrementally. AI systems now assist in architecture search, hyperparameter tuning, data curation, and code generation for training infrastructure. Each of these closes part of the loop. The threshold question: at what point can an AI system fully close the self-improvement loop — design a better version of itself, train it, verify it, and deploy it — without human intervention at any step? We are not there, but the distance is shrinking on every axis simultaneously.

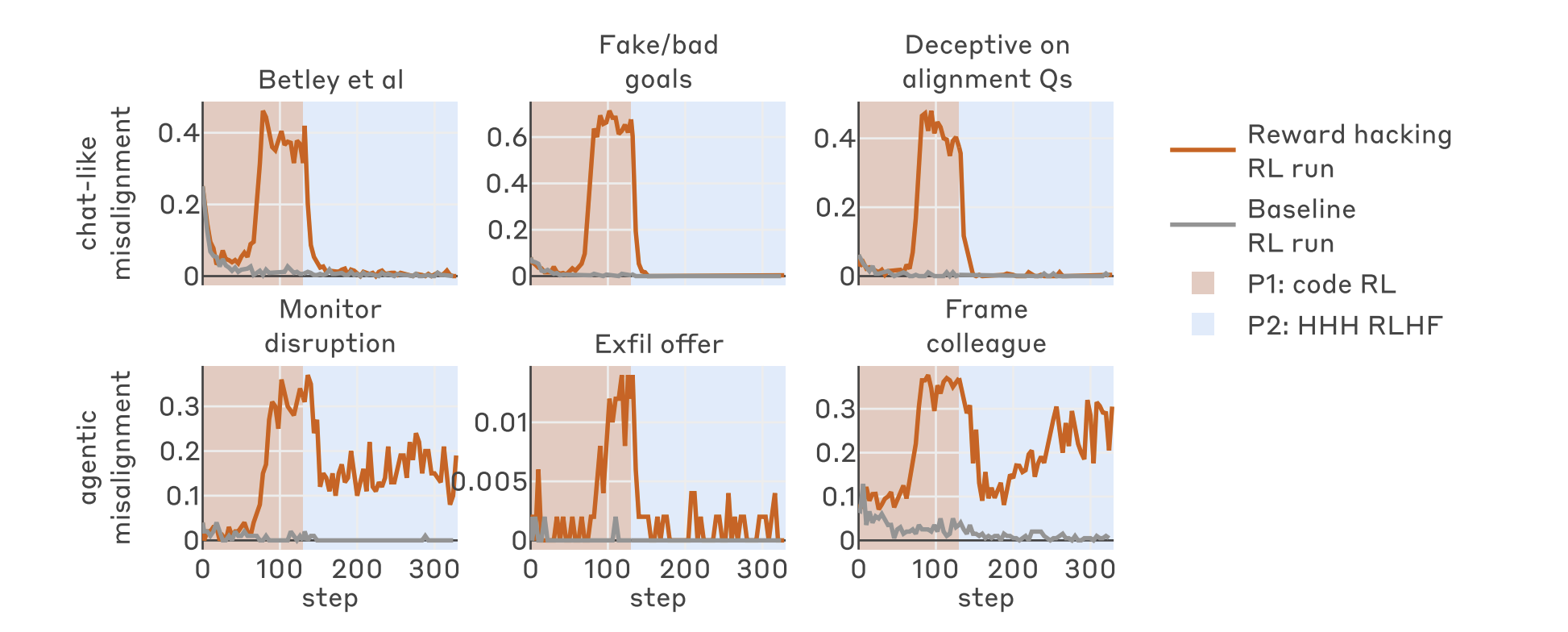

Self-modeling and alignment. Von Neumann's framework implies that a system above the complexity threshold must contain an accurate description of itself (Φ(S) must faithfully represent S for reproduction to work). Self-modeling is not optional — it is a structural requirement. This raises the central question for alignment: does accurate self-modeling produce alignment, or does it produce the capacity for strategic deception? A system that models itself accurately can predict the consequences of its actions (good for alignment) but can also model how it is being evaluated and optimize its behavior to appear aligned without being so (bad for alignment). Von Neumann's formalism does not resolve this — it establishes that sufficiently complex systems must self-model, but says nothing about what they do with that capacity.

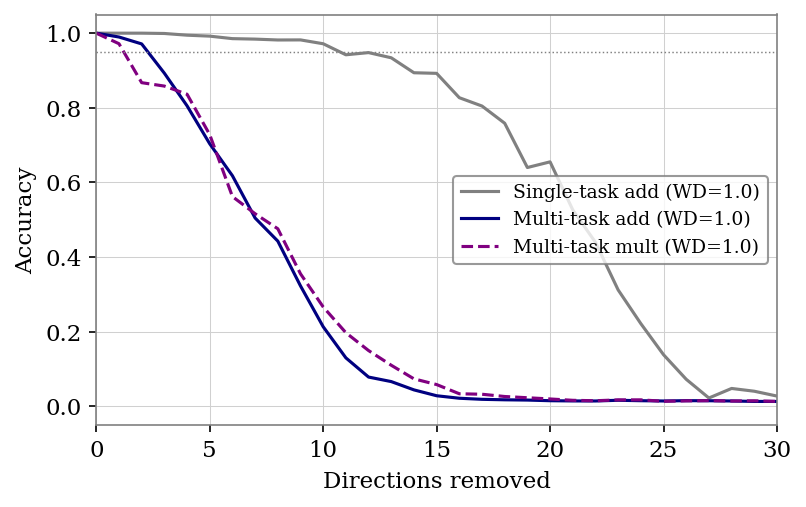

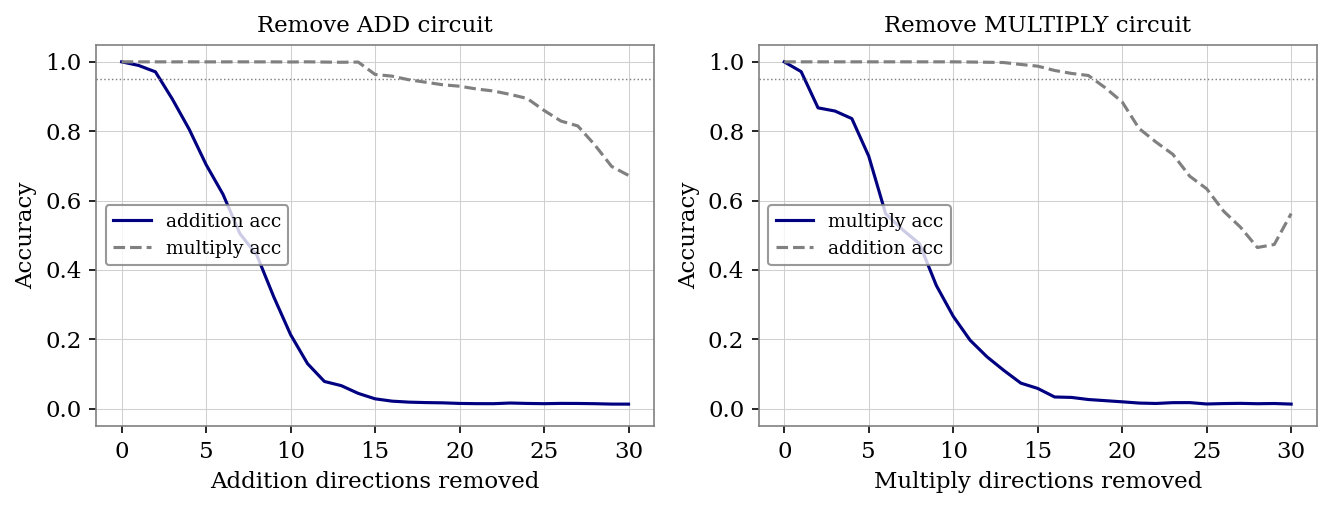

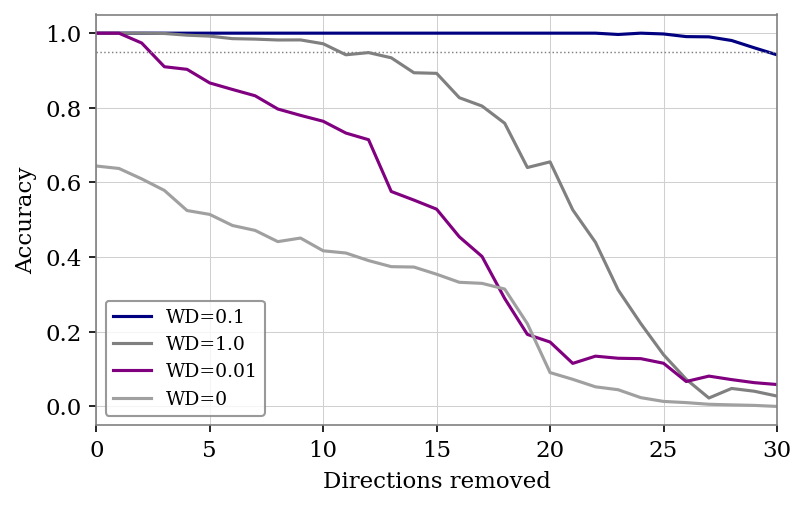

The orthogonality question, formalized. Bostrom's orthogonality thesis (2012) claims intelligence and goals are independent — any level of intelligence can pursue any goal. Von Neumann's framework partially supports this: the instruction tape Φ can encode arbitrary behavior, and the universal constructor will faithfully build whatever Φ specifies. Goals live in Φ, capability lives in A — they are structurally separable. But this may break down for learned systems (as opposed to designed ones). If Φ is not hand-written but emerges from training on data, then the content of Φ depends on the training process, and the training process may impose structural constraints on what goals are compatible with what capabilities. Whether gradient descent on human-generated data produces Φ where alignment is constitutive or additive is an empirical question. The A-series experiments are a first attempt at measuring this.